Demonstration

Introduction

Voice-controlled systems are becoming critical in both consumer and industrial domains. Yet most speech detection systems are designed for cloud environments and struggle on low-power edge devices. This project addresses that limitation by enabling real-time, accurate speech detection directly on embedded hardware, which improves offline usability, lowers latency, and enhances privacy.

Methods

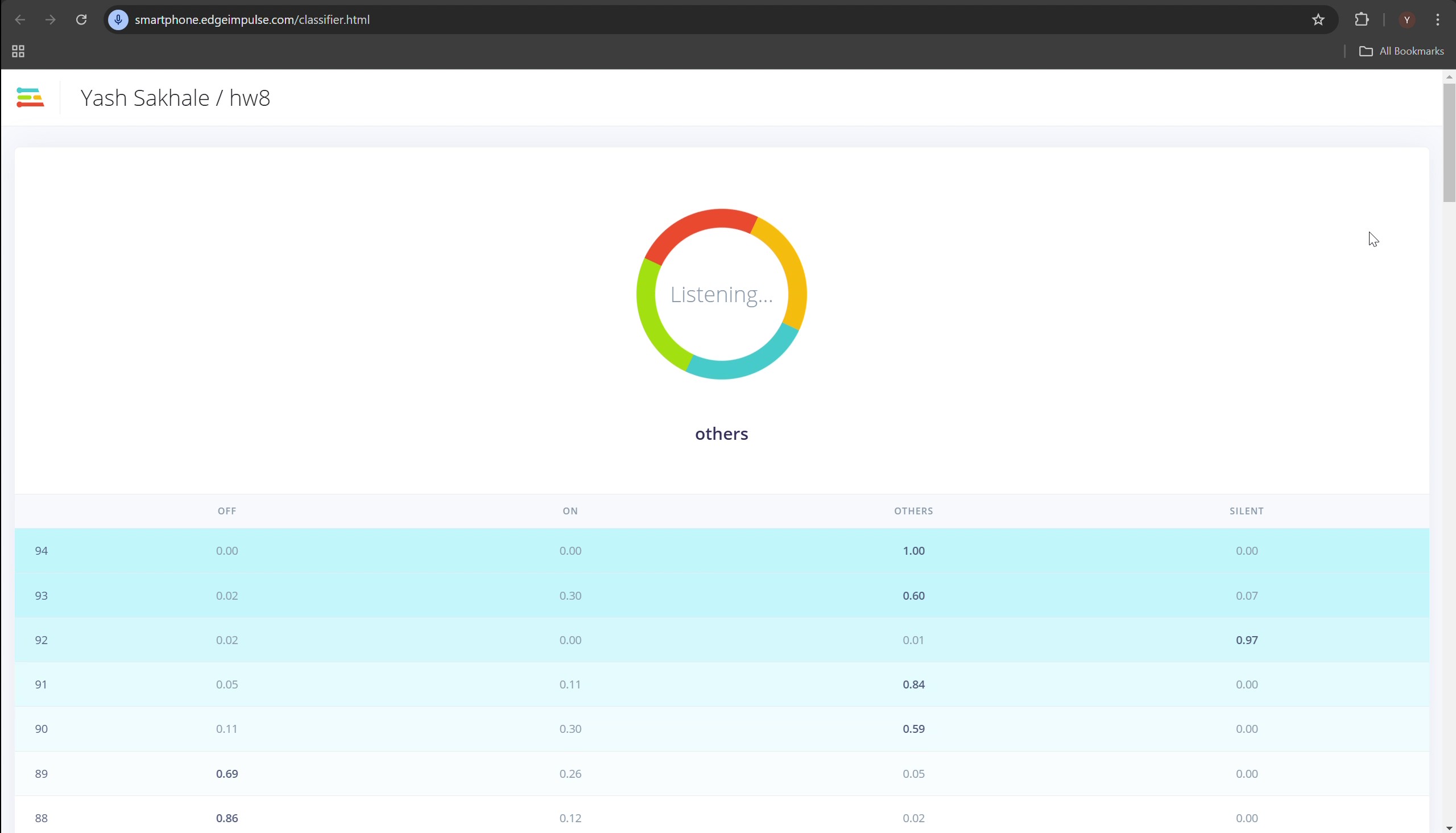

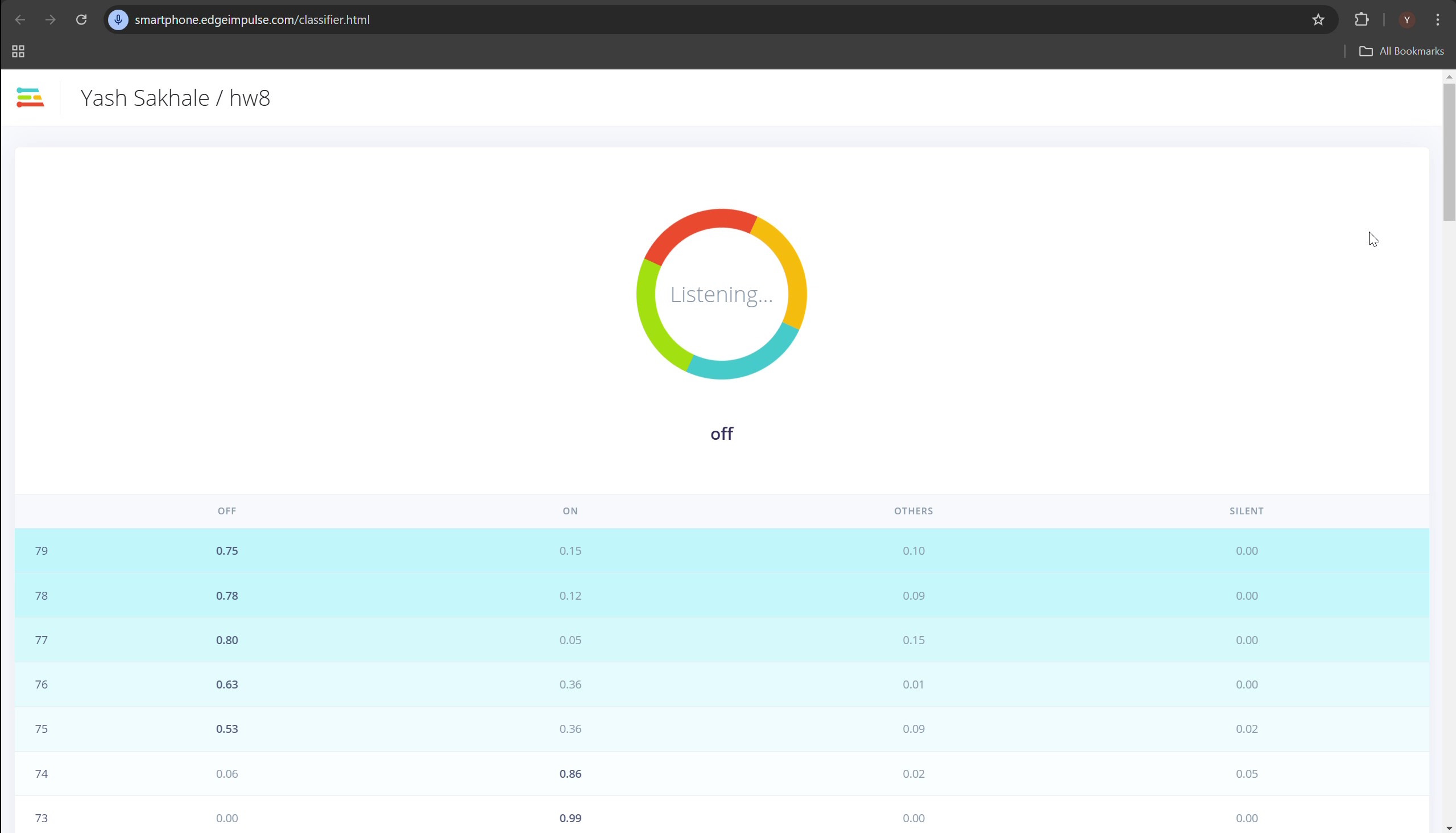

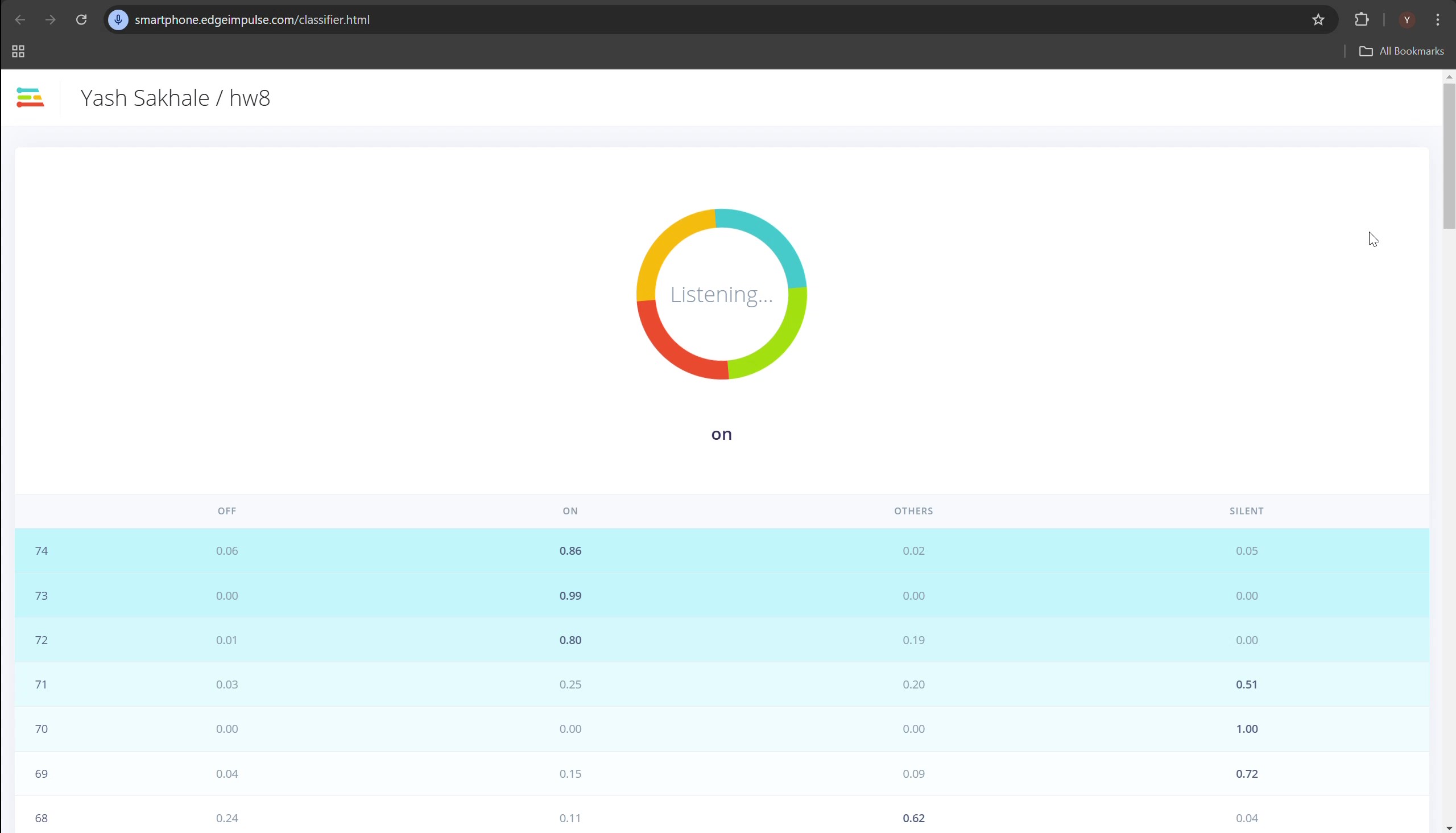

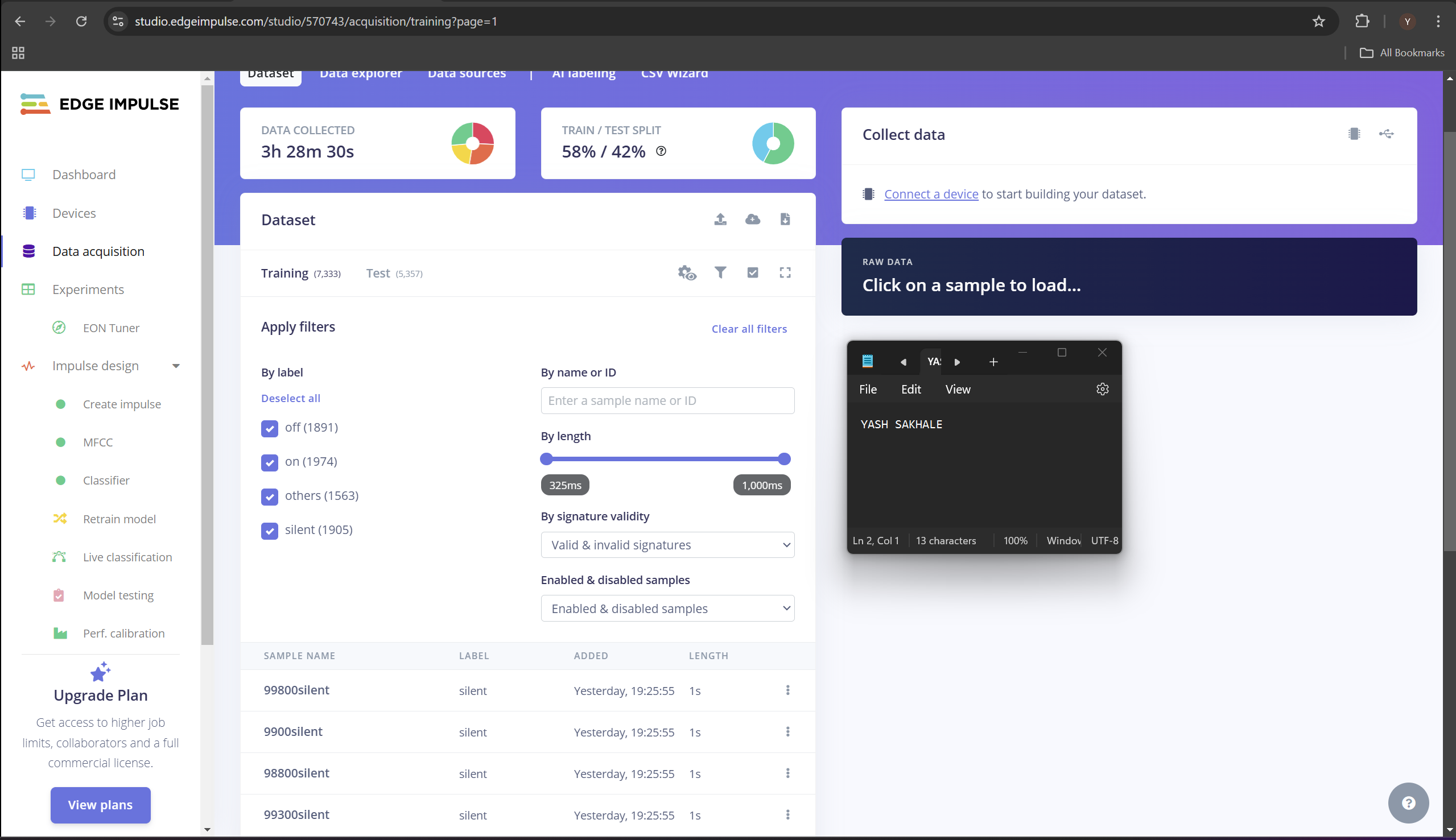

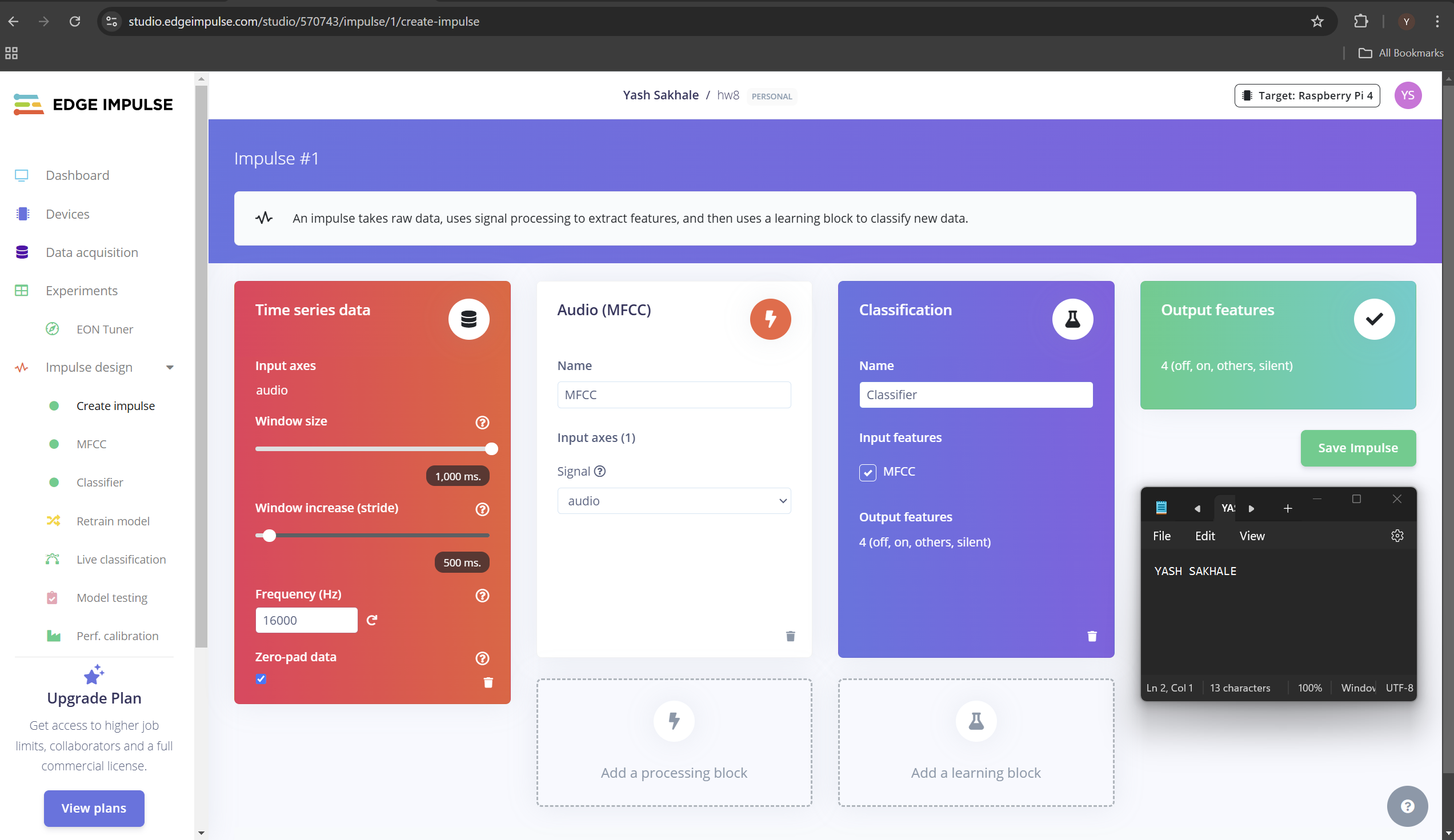

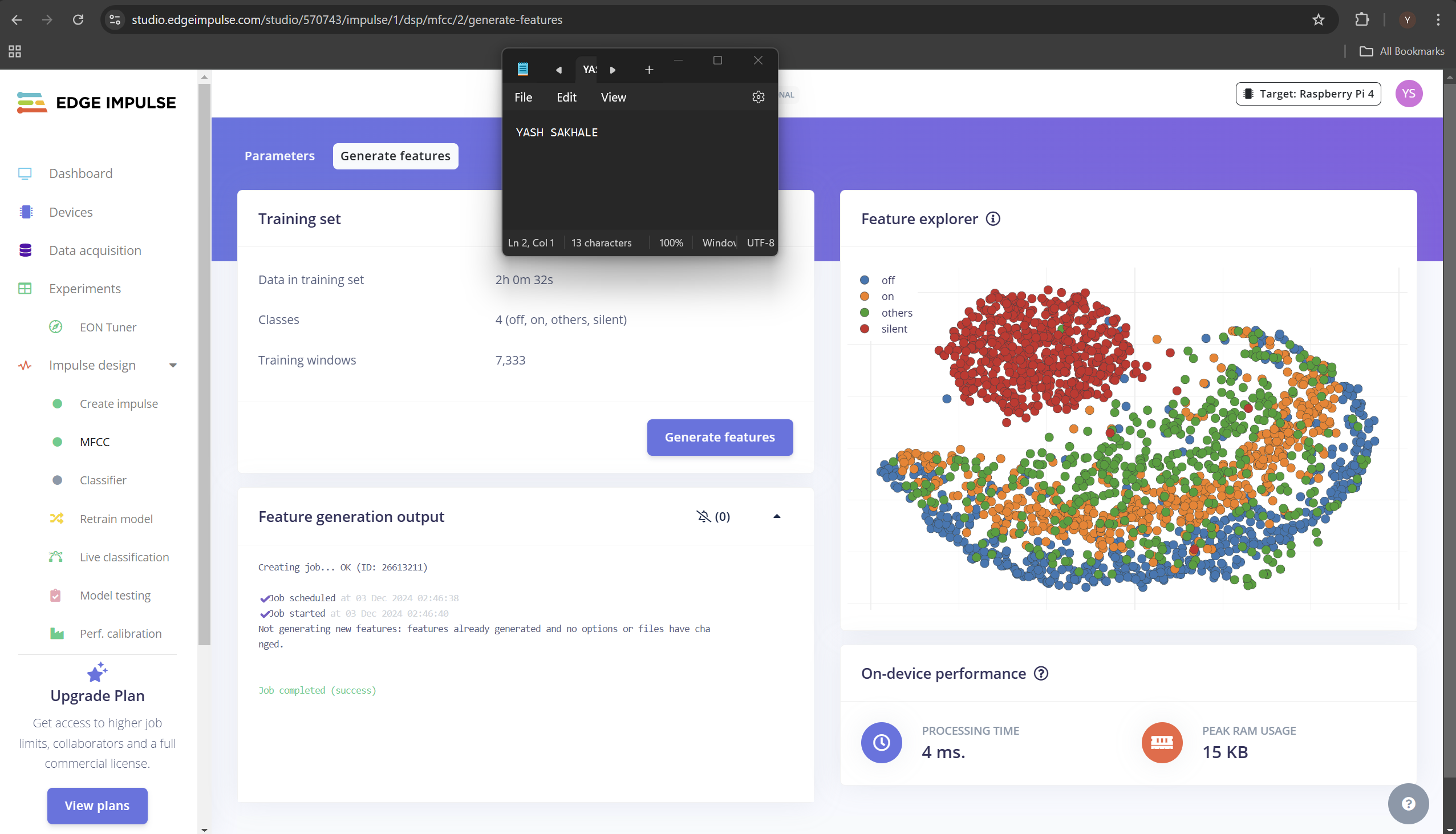

Using the Edge Impulse platform, I developed a real-time speech detection model optimized for devices such as the Arduino Nano BLE Sense and Raspberry Pi Pico. After collecting diverse audio samples, I extracted MFCC (Mel-frequency cepstral coefficient) features and trained a compact neural network tailored to memory-constrained environments. I also tuned the preprocessing and the model to make detection robust in noisy settings.
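
The MFCC step can be illustrated with a minimal NumPy sketch: pre-emphasis, framing with a Hamming window, power spectrum, triangular mel filterbank, log, and a DCT. It assumes 16 kHz mono audio, 25 ms frames with a 10 ms hop, a 512-point FFT, 32 mel filters, and 13 coefficients. These are common textbook defaults, not the settings used in this project, and Edge Impulse's own MFCC processing block may differ in details such as filter normalization. The function names (`mfcc`, `mel_filterbank`, `dct_matrix`) are hypothetical.

```python
import numpy as np


def hz_to_mel(hz):
    # HTK-style mel scale (one common convention; others exist)
    return 2595.0 * np.log10(1.0 + hz / 700.0)


def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_filterbank(n_filters, n_fft, sample_rate, f_min=0.0, f_max=None):
    """Triangular filters mapping an (n_fft // 2 + 1)-bin power spectrum to mel bands."""
    f_max = sample_rate / 2.0 if f_max is None else f_max
    # n_filters + 2 edge/center points, equally spaced on the mel scale
    hz_points = mel_to_hz(np.linspace(hz_to_mel(f_min), hz_to_mel(f_max), n_filters + 2))
    bin_freqs = np.linspace(0.0, sample_rate / 2.0, n_fft // 2 + 1)
    fbank = np.zeros((n_filters, n_fft // 2 + 1))
    for m in range(1, n_filters + 1):
        left, center, right = hz_points[m - 1], hz_points[m], hz_points[m + 1]
        rising = (bin_freqs - left) / (center - left)
        falling = (right - bin_freqs) / (right - center)
        # Triangle peaks at 1 on the center frequency, 0 outside [left, right]
        fbank[m - 1] = np.maximum(0.0, np.minimum(rising, falling))
    return fbank


def dct_matrix(n_out, n_in):
    """Orthonormal DCT-II basis of shape (n_out, n_in)."""
    k = np.arange(n_out)[:, None]
    n = np.arange(n_in)[None, :]
    basis = np.cos(np.pi * k * (2 * n + 1) / (2 * n_in)) * np.sqrt(2.0 / n_in)
    basis[0] /= np.sqrt(2.0)
    return basis


def mfcc(signal, sample_rate=16000, frame_len=0.025, frame_step=0.010,
         n_fft=512, n_filters=32, n_coeffs=13, pre_emphasis=0.97):
    """Return an (n_frames, n_coeffs) MFCC matrix. Parameter defaults are assumptions."""
    signal = np.asarray(signal, dtype=np.float64)
    # Pre-emphasis boosts high frequencies before spectral analysis
    emphasized = np.append(signal[0], signal[1:] - pre_emphasis * signal[:-1])
    flen = int(round(frame_len * sample_rate))
    fstep = int(round(frame_step * sample_rate))
    if len(emphasized) < flen:  # guarantee at least one full frame
        emphasized = np.pad(emphasized, (0, flen - len(emphasized)))
    n_frames = 1 + (len(emphasized) - flen) // fstep
    # Overlapping frames via index arithmetic, each multiplied by a Hamming window
    idx = np.arange(flen)[None, :] + fstep * np.arange(n_frames)[:, None]
    frames = emphasized[idx] * np.hamming(flen)
    power = np.abs(np.fft.rfft(frames, n=n_fft)) ** 2 / n_fft
    mel_energy = power @ mel_filterbank(n_filters, n_fft, sample_rate).T
    log_mel = np.log(mel_energy + 1e-10)  # small floor avoids log(0)
    return log_mel @ dct_matrix(n_coeffs, n_filters).T


if __name__ == "__main__":
    sr = 16000
    t = np.arange(sr) / sr
    feats = mfcc(np.sin(2 * np.pi * 440.0 * t), sample_rate=sr)
    print(feats.shape)  # (98, 13)
```

For one second of 16 kHz audio this yields a 98 × 13 matrix, a far smaller input for a compact network than the 16,000 raw samples, which is the main reason MFCCs suit memory-constrained targets.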

Key results:
- Achieved 94.5% accuracy and 97% recall on the test dataset
- Inference time under 100 ms on edge hardware
- Robust performance across varied environmental noise conditions
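
Accuracy and recall follow their standard definitions: accuracy is the fraction of all test clips classified correctly, and recall is TP / (TP + FN) for the class of interest. The minimal sketch below computes both from test-set predictions. The labels `"speech"` and `"noise"` and the eight predictions are hypothetical toy data, not this project's test results.

```python
from typing import Hashable, Sequence


def accuracy(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Fraction of predictions that match the true label."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def recall(y_true: Sequence[Hashable], y_pred: Sequence[Hashable], positive: Hashable) -> float:
    """TP / (TP + FN): share of actual `positive` examples the model detected."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be equal in length")
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp + fn == 0:
        raise ValueError("no positive examples in y_true")
    return tp / (tp + fn)


# Hypothetical toy labels for illustration only (not the project's data)
y_true = ["speech"] * 4 + ["noise"] * 4
y_pred = ["speech", "speech", "speech", "speech", "speech", "noise", "noise", "noise"]

print(accuracy(y_true, y_pred))          # 0.875
print(recall(y_true, y_pred, "speech"))  # 1.0
```

Because recall only counts how many true positives are caught, it can differ from overall accuracy in either direction, as with the reported 97% recall versus 94.5% accuracy.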

Discussion

This project demonstrates that accurate and efficient speech detection can run entirely on-device. Removing the cloud dependency reduces latency and improves user privacy. The documentation visuals (see Figures 1-3) explain the model pipeline and interface. I led every stage, from data preparation to deployment, demonstrating end-to-end ML development for embedded systems.