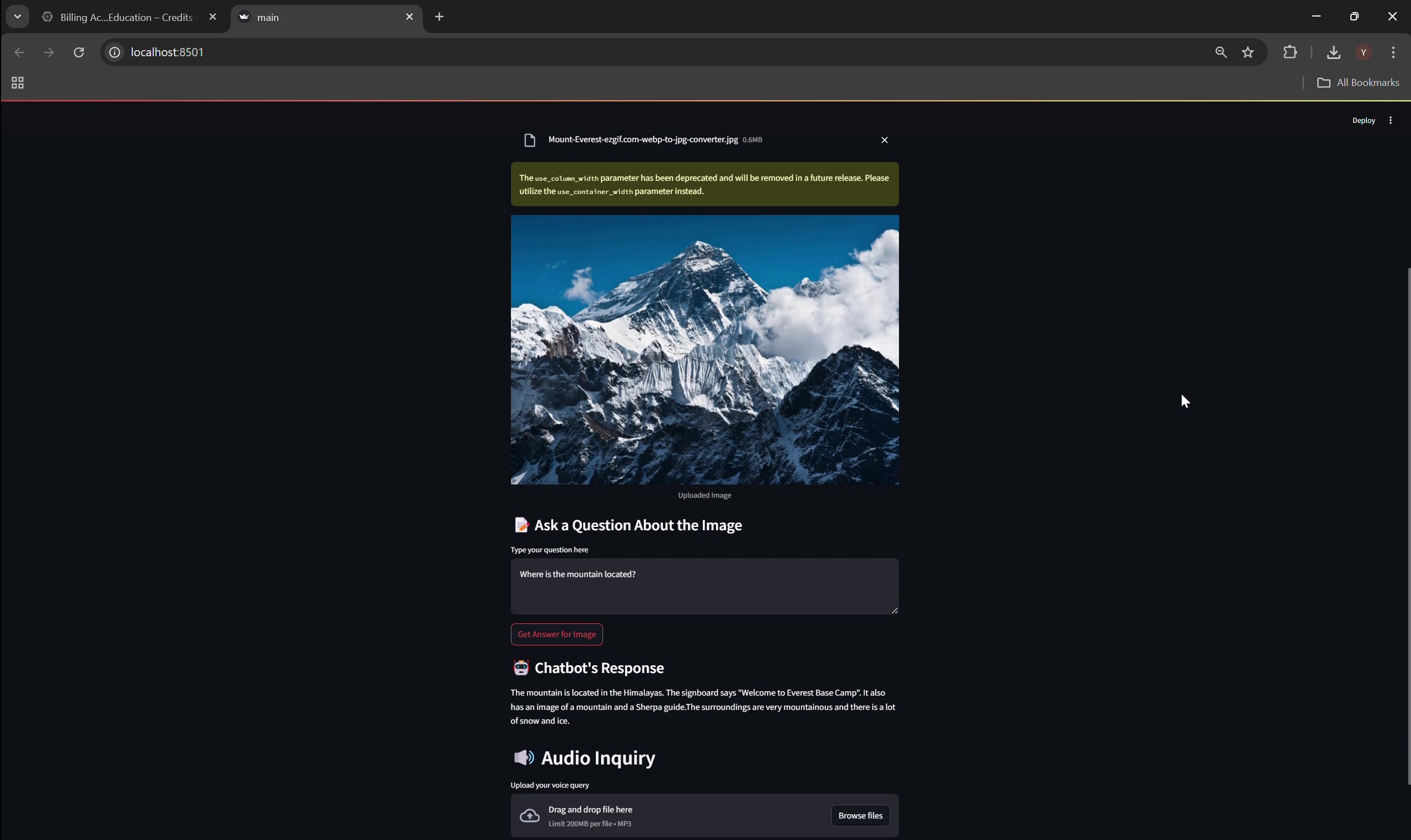

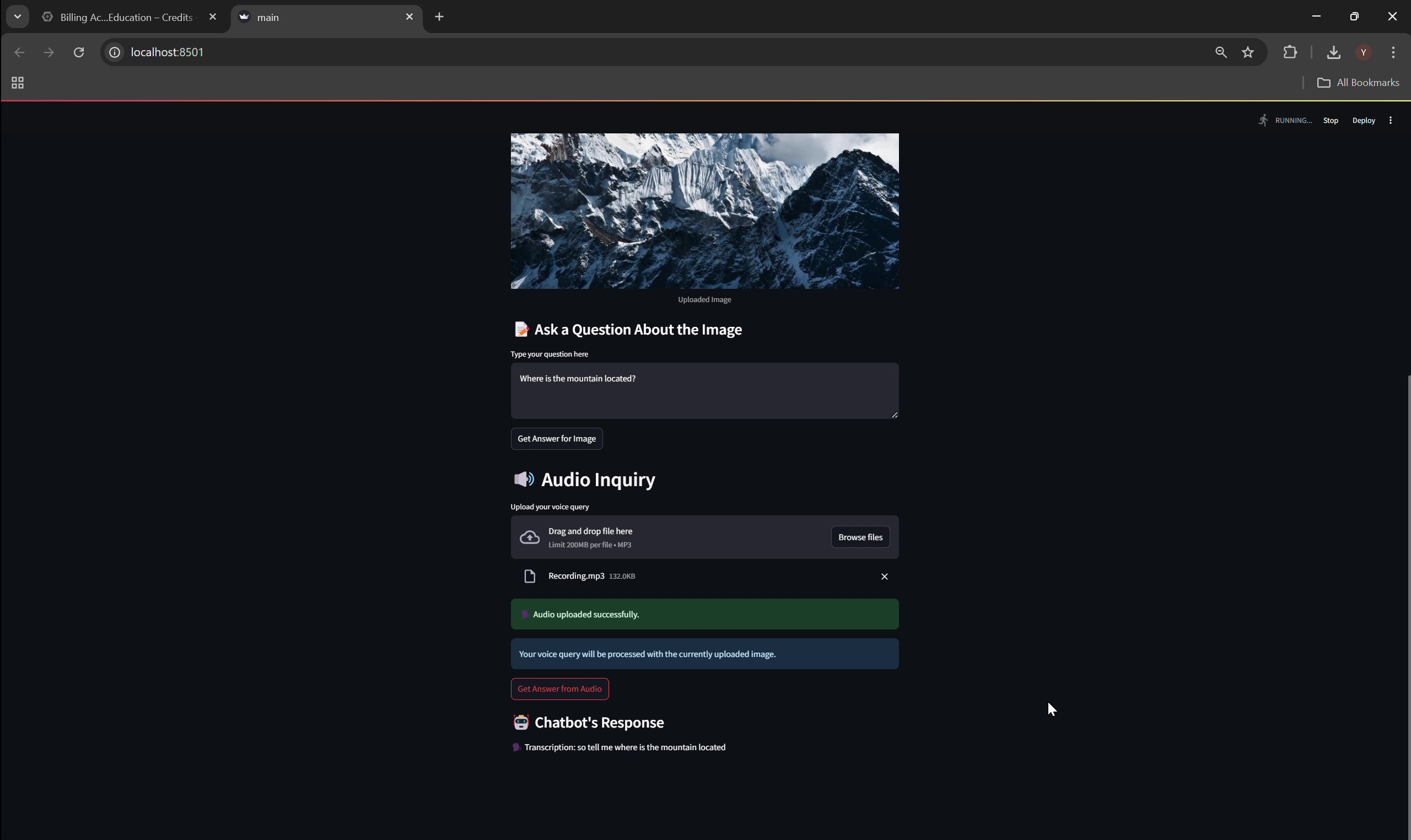

Demonstration

Introduction

Traditional chatbots are limited in handling multimodal inputs such as audio and images, which restricts their use in natural, human-like interaction. With growing demand for accessible, AI-driven systems in education, healthcare, and customer service, this project introduces OmniViz AI, a real-time chatbot that understands and responds to text, audio, and image queries using multimodal AI models.

Methods

The chatbot is deployed on Google Cloud Platform (GCP), integrating:

- Vertex AI Gemini model for multimodal reasoning

- GCP Speech-to-Text API for transcribing audio to text

- An image upload interface to support vision-based queries
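The integration above amounts to normalizing every query into model inputs. Audio is transcribed to text first, and an uploaded image is passed alongside any text. The following is a minimal sketch of that routing under stated assumptions: `Query`, `build_parts`, and `answer` are hypothetical names, and the transcriber and model are injected as plain callables rather than real Speech-to-Text or Vertex AI clients, so the example runs without GCP credentials.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Query:
    """One user turn; any combination of modalities may be present."""
    text: Optional[str] = None
    audio: Optional[bytes] = None
    image: Optional[bytes] = None

# Hypothetical backend signatures. In a real deployment these would wrap the
# Speech-to-Text and Vertex AI Gemini client libraries.
Transcriber = Callable[[bytes], str]
MultimodalModel = Callable[[list], str]

def build_parts(query: Query, transcribe: Transcriber) -> list:
    """Normalize a query into an ordered list of model input parts."""
    parts = []
    if query.audio is not None:
        # Speech is converted to text before it reaches the model.
        parts.append({"type": "text", "content": transcribe(query.audio)})
    if query.text:
        parts.append({"type": "text", "content": query.text})
    if query.image is not None:
        parts.append({"type": "image", "content": query.image})
    if not parts:
        raise ValueError("query contains no text, audio, or image")
    return parts

def answer(query: Query, transcribe: Transcriber, model: MultimodalModel) -> str:
    """Route a (possibly hybrid) query through transcription and the model."""
    return model(build_parts(query, transcribe))

if __name__ == "__main__":
    # Stub backends so the example runs offline.
    fake_transcribe = lambda audio: "what is in this picture?"
    fake_model = lambda parts: f"received {[p['type'] for p in parts]}"
    print(answer(Query(audio=b"...", image=b"\x89PNG"), fake_transcribe, fake_model))
    # prints: received ['text', 'image']
```

Injecting the backends keeps the routing logic testable offline. In production the two callables would wrap the actual GCP client libraries, whose request formats are not shown here.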

All API calls are optimized with batch processing, request pipelining, and parallel data handling. The system is containerized and deployed via GCP Cloud Run for scalability and reliability.
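The write-up does not describe how batching and parallelism are implemented. One common pattern, sketched here as an assumption rather than the project's actual code, is to group requests into fixed-size batches and dispatch independent batches concurrently with `asyncio`, bounding the number of in-flight calls with a semaphore. `batched`, `run_concurrently`, and `fake_api_call` are hypothetical names; `fake_api_call` only sleeps to stand in for network latency.

```python
import asyncio
from typing import Awaitable, Callable, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")

def batched(items: List[T], size: int) -> List[List[T]]:
    """Split items into consecutive batches of at most `size` elements."""
    if size < 1:
        raise ValueError("size must be >= 1")
    return [items[i:i + size] for i in range(0, len(items), size)]

async def run_concurrently(
    items: List[T], worker: Callable[[T], Awaitable[R]], limit: int = 4
) -> List[R]:
    """Run `worker` on every item with at most `limit` calls in flight.

    asyncio.gather returns results in input order, not completion order.
    """
    sem = asyncio.Semaphore(limit)

    async def guarded(item: T) -> R:
        async with sem:
            return await worker(item)

    return list(await asyncio.gather(*(guarded(i) for i in items)))

async def fake_api_call(batch: List[int]) -> int:
    # Hypothetical stand-in for one batched API request.
    await asyncio.sleep(0.01)
    return sum(batch)

async def main() -> List[int]:
    batches = batched(list(range(10)), size=3)  # [[0, 1, 2], [3, 4, 5], [6, 7, 8], [9]]
    return await run_concurrently(batches, fake_api_call, limit=2)

if __name__ == "__main__":
    print(asyncio.run(main()))  # prints: [3, 12, 21, 9]
```

The concurrency limit is a deliberate design choice: managed APIs enforce request quotas, so an unbounded `gather` over many requests can trigger rate limiting instead of reducing latency.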

Results

- 25% reduction in response latency compared to the prototype

- Real-time handling of audio, image, and text inputs

- Tested use cases: visual Q&A, voice interaction, and hybrid input
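A latency reduction like the one reported is a relative figure, (baseline - candidate) / baseline × 100. The project's latency test scripts are not included in this summary, so the following is only a hypothetical harness: it times repeated calls with `time.perf_counter`, compares medians, and computes that percentage. The names `measure_latency`, `percent_reduction`, and `compare` are illustrative, and the 100 and 75 values in the usage line are made-up inputs, not measurements from this project.

```python
import statistics
import time
from typing import Callable, List

def measure_latency(fn: Callable[[], object], runs: int = 20) -> List[float]:
    """Call fn `runs` times and return per-call wall-clock latencies in seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return samples

def percent_reduction(baseline: float, candidate: float) -> float:
    """Relative latency reduction: (baseline - candidate) / baseline * 100."""
    if baseline <= 0:
        raise ValueError("baseline latency must be positive")
    return (baseline - candidate) / baseline * 100.0

def compare(baseline_fn: Callable[[], object],
            candidate_fn: Callable[[], object], runs: int = 20) -> float:
    """Percent reduction in median latency of candidate_fn versus baseline_fn."""
    base = statistics.median(measure_latency(baseline_fn, runs))
    cand = statistics.median(measure_latency(candidate_fn, runs))
    return percent_reduction(base, cand)

if __name__ == "__main__":
    # Illustrative arithmetic only: a 100 ms baseline vs. a 75 ms candidate.
    print(percent_reduction(100.0, 75.0))  # prints: 25.0
```

The median is used rather than the mean because network-bound latencies tend to be right-skewed, so a few slow calls would otherwise dominate the comparison.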

Discussion

OmniViz AI unifies speech, vision, and text reasoning in a single accessible interface. Gemini enables contextual understanding and richer interaction, making the system well suited to accessibility tools, digital tutoring, and AI customer support. The seamless UI and responsive backend are designed to keep users engaged.

I led the design and development of the architecture, integrated and tuned GCP services, built latency test scripts, and evaluated system performance across modalities.